Hi

Hope you had a wonderful weekend, wherever you may be. Mine was awesome: Hanoi is starting to shake off Mouldy March (turns out the sky is blue behind all that “cloud” – who knew? 😲), and we’re entering one of my favourite parts of the year here. If you find yourself planning a trip, Spring and Autumn are where it’s at, for sure #greattimeforavisit

Anyway, enough weather chat – let’s get into it with the world of AI:

OpenAI’s New o-Series: Reasoning Revolution or Hype? | Product Analysis 🤖

Another week, another new model (or two) – this time from OpenAI, which has just released its new o3 and o4-mini models. The ‘o’ indicates that these are reasoner-class models, and the special sauce here seems to be that they can decide when and how to use the various tools they are integrated with (web search, data analytics, image processing, etc.) rather than just following instructions. There are benchmarks suggesting these are an improvement on previous models and, interestingly, a suggestion that they might actually be cheaper to use than their predecessors in many scenarios – running counter to the typical “better = more expensive” pattern we’ve grown accustomed to in AI.

A few quick extra notes. On the housekeeping/USP-of-each-model front, model proliferation at OpenAI is becoming somewhat confusing: their interface now offers 8 different models, compared with Claude’s 4 and Gemini’s 3 base options, each of which has a clear power/speed trade-off. This complexity could create downstream issues for users trying to work out which model to use when. 🤷 As for the hype: not too long ago, Sam Altman was lauding o3 as a potential step toward AGI (the holy grail of artificial intelligence), but its actual significance remains to be seen as users put these new models through their paces in real-world applications. How might these reasoning models reshape what we teach about AI integration in higher education? As tools become more autonomous in deciding when to use different capabilities, what new skills should students develop to collaborate with them effectively? ✨

UAE Pioneers AI Governance: Promise or Dystopia? | Policy Innovation 🌟

🇬🇧 “We’re injecting AI into government”. 🇦🇪 “Cool story. Ours just became sentient”. The UAE has just launched what they’re calling the world’s first integrated “regulatory intelligence ecosystem” – a groundbreaking AI-powered legal framework that’s set to transform how governments create and manage laws. This revolutionary system connects federal and local legislation with judicial rulings and implementation data to create dynamic, responsive regulations rather than static documents gathering dust. The most impressive part? It promises to accelerate the legislative process by up to 70%, while continuously monitoring global developments and automatically identifying gaps in the national legal framework.

I have mixed feelings about this. I think there’s a lot more room for direct civic engagement these days (e.g., I think a blockchain-based direct, rather than representative, democratic voting system would be vastly more responsive and engaging), and given that government is almost a byword for bureaucracy across the world, I can see the appeal… but the downside sounds straight out of a Black Mirror episode. After all, this sentence – “It preserves the national legislative character shaped by the vision of the founding fathers and societal values while embracing the flexibility and speed of AI-driven regulation” – is packed full of variables that could rapidly become dystopian: “national legislative character”, “vision of the founding fathers”, “societal values”. What happens when ‘societal values’ are interpreted differently across regions? There’s definitely a bit of dystopian flavour in the news these days, after all… I’ll be watching with interest.

AI Confidence Gap: Expert Optimism vs Public Skepticism | Research Analysis 🧠

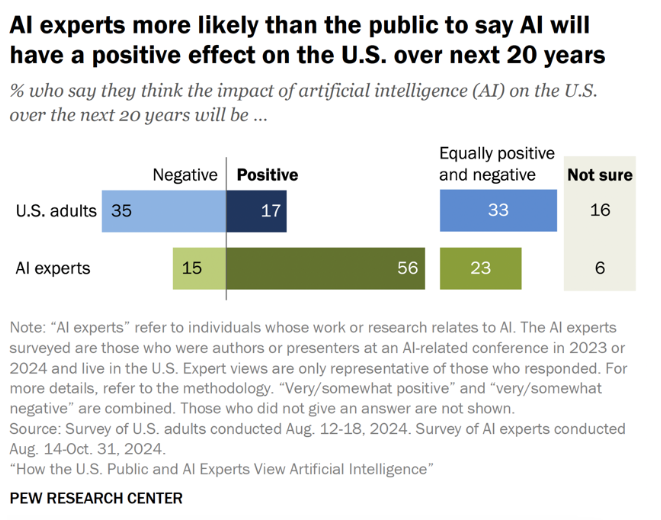

Despite AI becoming increasingly woven into our daily lives (with 82% of people consciously using it according to EY’s recent global study), there’s a striking sentiment divide between experts and the general public. While nearly half of AI specialists report feeling more excited than concerned about AI’s growing role, a mere 11% of the American public shares this optimism, according to Pew Research Center findings. 😮

Super interesting divergence…

What’s causing this confidence gap? Both groups share worries about misinformation (70% of experts and 66% of the public per Pew), data privacy, and algorithmic bias, but the public expresses significantly higher anxiety about job displacement (56% public vs 25% experts) and loss of human connection. The contrast is particularly stark when looking at perceived personal impact – a whopping 76% of experts believe AI will benefit them personally, compared to just 24% of the public.

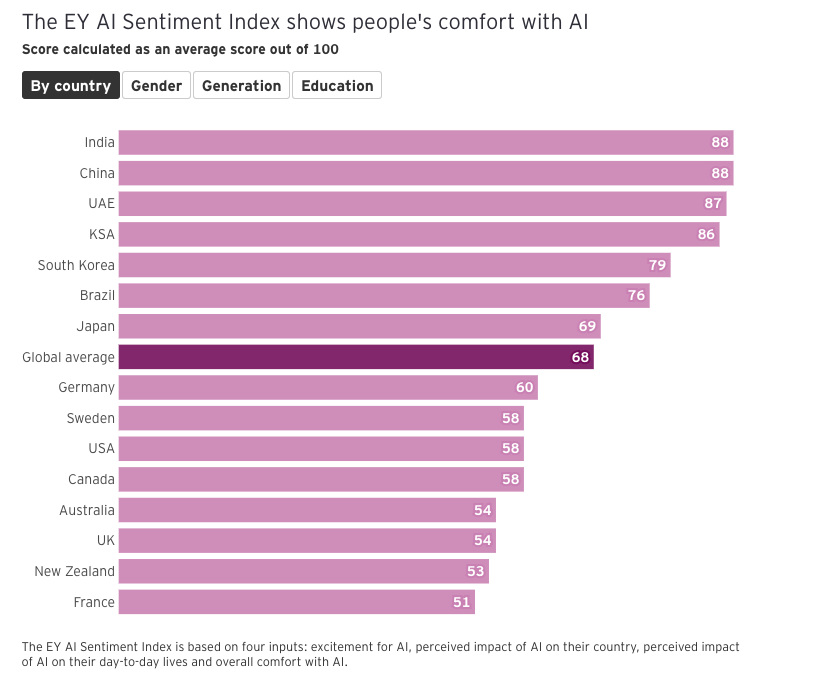

This trust deficit presents a critical challenge for the AI industry moving forward. With 63% of Americans believing they’ll never trust AI with important personal decisions (while experts are split on this question), companies and governments face an uphill battle to build public confidence. Both experts and the public worry more about insufficient regulation than overregulation, yet neither group has much faith in government or corporate leadership to handle AI responsibly (Pew found 62% of the public and 53% of experts lack confidence in the US government to regulate AI effectively). Worth noting, too, that some interesting national differences are emerging.

I’d be genuinely interested if someone could find an explanatory trendline for that…

The path forward requires more than technological advancement – it demands what EY calls a “license to lead” through practices that actively address ethical concerns, demonstrate value aligned with human needs, and build systems that complement rather than replace human agency. With such a stark divide between expert optimism and public wariness, how can higher education (HE) bridge this confidence gap? Should courses that demystify AI for non-technical students become a core educational requirement alongside traditional digital literacy? 🤔

Gen AI Evolution: From Code to Companionship | Usage Trends 🤔

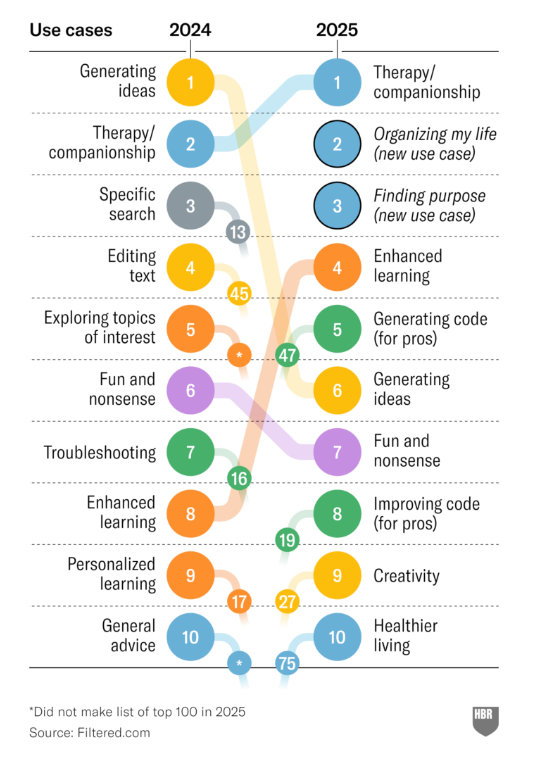

Welcome to the unexpected reality of AI in 2025! According to Harvard Business Review’s latest research, the #1 use case for generative AI is now therapy and companionship, with “organising my life” and “finding purpose” rounding out the top three. This marks a dramatic shift from technical applications to emotional support and personal development, reflecting a fundamental evolution in how we’re integrating these technologies into our lives. As one South African user explained, “Where I’m from, mental healthcare barely exists… large language models are accessible to everyone, and they can help.” 🌟

Top 10 use-cases of AI in 2025 according to HBR

This transformation reveals something profound about our relationship with technology – we’re moving beyond seeing AI as a mere productivity tool and are increasingly turning to it as a partner in our personal growth journeys. As AI moves further into emotional support roles, what ethical frameworks should guide our teaching? When students turn to AI for both academic assistance and personal guidance, how might this transform the traditional mentor-mentee relationship in higher education? 🤔

Kling 2: Visual AI Takes a Leap Forward | Technology Review 🎬

And it is spectacular. The Phase 2.0 update introduces major enhancements to both video and image generation, with better prompt responsiveness, more cinematic visuals, and smoother, more realistic motion. New multimodal tools allow users to easily swap, add, or remove elements in short videos, as well as edit or restyle images using natural language prompts. These upgrades make it easier than ever to create high-quality, stylised visual content with greater control and creative flexibility. Try it out for yourself here.

The previous big kid on the block, Veo 2, is now available to anyone in the criminally underutilised Google AI Studio. It’s admittedly awesome, but for every world-class new AI-first production studio (e.g., Wonder Studios looks amazing – and is that an AI version of The Old Man and the Sea? 😍), you have to ask what this will mean for environmental costs when potentially hundreds of millions of people are mass-producing fairly ordinary AI movies… or for copyright/IP law, which (did you see?) both Jack Dorsey and Elon Musk want to get rid of. Anyway, as visual generation reaches new heights, how should universities prepare students in creative fields for a landscape where AI can produce cinema-quality content? What new literacies will graduates need in a world of AI-generated media? #interestingtimes

The evidence is clear: we’ve entered a critical phase in the AI revolution. As reasoning models evolve, regulatory frameworks adapt, and users increasingly turn to AI for emotional support, HE faces both challenges and opportunities. The stark confidence gap between experts and the public isn’t just a technical issue – it’s an educational one. Universities that bridge technical competence with ethical understanding won’t just survive these transformations – they’ll lead them. As content creation accelerates and governance evolves, preparing students for a world where AI literacy is essential becomes our shared responsibility. The AI revolution isn’t approaching – it’s here, waiting for education to catch up. Are we ready? 🧠✨