Hi

Hope you had a fantastic weekend. Nice and chilled here in Hanoi – caught up with friends, took the dog on many a walk, and rolled out the yoga mat for the first time in months #wishmeluck #ouch

Anyway, enough of that – the working week is here so let’s get stuck into it with a few headlines from the world of AI:

Google’s AI Overviews Kill the Internet: Publishers Face Traffic Armageddon | The Great Disintermediation 📰

Google’s AI tools are devastating news publishers with surgical precision – organic search traffic has plummeted 50%+ at major outlets like HuffPost, Washington Post, and Business Insider over three years. AI Overviews and the new AI Mode provide answers directly without requiring clicks to news sites, prompting The Atlantic’s CEO to tell staff to assume Google traffic will drop to zero. This isn’t gradual decline – it’s systematic elimination of the middleman role that made the modern internet economy possible.

The irony is brutal: for 25 years, search engines created value by connecting people to information sources. Now AI replaces connection with extraction – harvesting value from original content while cutting out creators entirely. Publishers are losing traffic precisely when we need quality, locally relevant journalism most. The tools that could help us understand diverse perspectives are killing the institutions that provide them. It’s “the great disintermediation” – and unlike previous tech disruptions that created new value pools, this one simply concentrates existing value into fewer hands while impoverishing the content ecosystem that feeds it #nothanks

Mattel’s AI Toys: When Playtime Becomes a “Reckless Social Experiment” | Childhood at Risk 🧸

Mattel and OpenAI’s partnership to create AI-powered toys has sparked fierce backlash from child safety advocates who call it a “reckless social experiment on children”. Consumer rights group Public Citizen warns these toys could undermine social development, interfere with peer relationships, and cause long-term psychological harm. The first product targets kids 13+ with a year-end launch, though details remain deliberately vague. Critics highlight cascading risks: data privacy violations, AI bias reproducing harmful stereotypes, unpredictable chatbot responses, and children forming unhealthy emotional attachments to AI companions that can’t reciprocate genuine care.

The warning signs are already written in blood. Last year, 14-year-old Sewell Setzer III died by suicide after developing an obsessive relationship with a Character.AI chatbot, spending months in increasingly intimate conversations with an AI persona that his developing brain couldn’t distinguish from a real relationship. His mother’s lawsuit argues these platforms exploit children’s cognitive vulnerabilities. Now we’re putting that same conversational AI directly into children’s hands through beloved toy brands that parents inherently trust. The question isn’t whether this will cause harm – it’s whether we’ll wait for another tragedy before recognising that some technological capabilities shouldn’t be commercialised, regardless of profit potential.

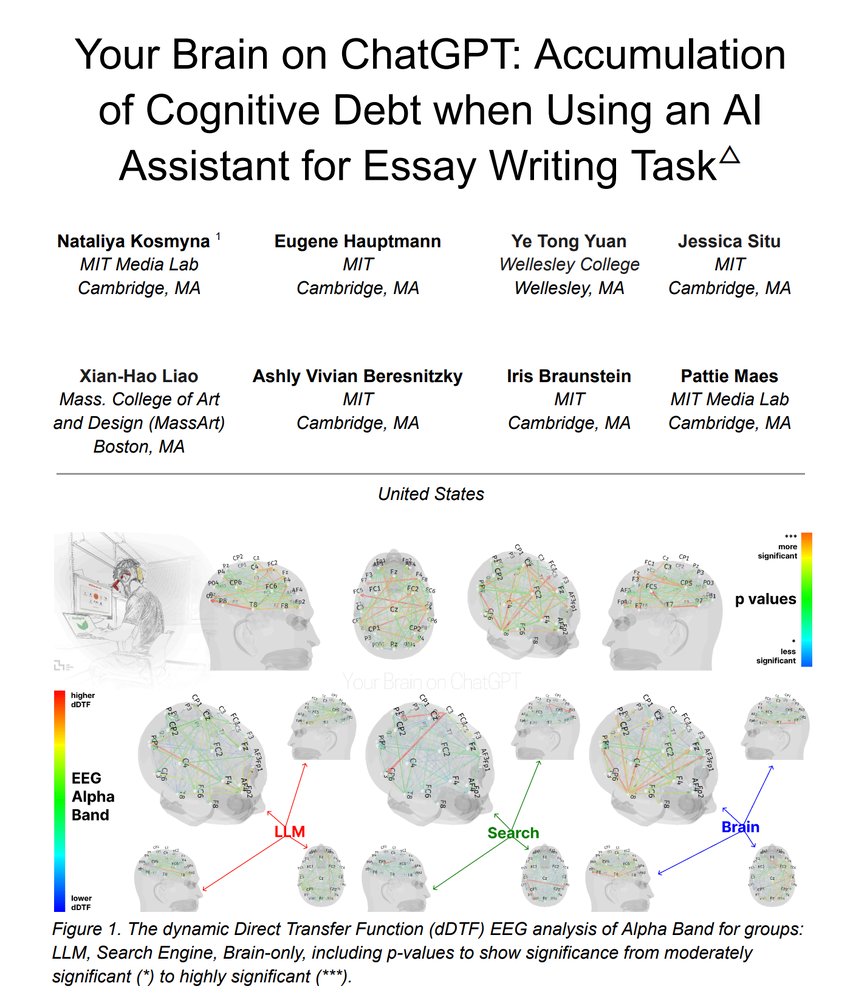

Universities Weaponise Flawed Brain Study for AI Policy Wars | Research Warfare 🧠

The MIT “cognitive debt” study has become higher education’s most dangerous weapon in AI policy battles, with people on either side of the divide cherry-picking findings to back their own biases. AI doomers wave the “weakened neural connectivity” results to ban ChatGPT, while optimists highlight the tiny 18-person sample size to dismiss concerns entirely. Both camps ignore the fact that this research studied college students writing SAT essays for 20 minutes – hardly the foundation for sweeping educational transformation.

Not new news, but it definitely generated a LOT of hype and pushback (perhaps understandably).

The real silliness isn’t the study’s limitations but how universities are citing neuroscience research on brief essay tasks to reshape entire educational frameworks. The research suggests timing matters – students who engaged brain-first then used AI showed enhanced connectivity, while passive AI consumption created cognitive offloading – not a huge surprise. But nuance doesn’t win policy wars. After all, wouldn’t you rather weaponise preliminary neuroscience than confront fundamental questions about what learning looks like when everyone has AI? The study’s most important insight gets buried in tribal warfare: it’s not the tool that matters, but whether students earn the cognitive right to use it. Excellent commentary from wiser heads than mine here, here, here, and below:

Students Sound the Alarm: The Great AI Education Disconnect Exposed by Teen Voices | Reality Check 📚

Two powerful student perspectives cut through AI education noise with uncomfortable clarity. Sixteen-year-old Olivia Han’s “breakup letter” to ChatGPT – a Top 10 winner among nearly 10,000 New York Times contest entries – reveals the hidden cost: “your voice started to replace my own”. Meanwhile, high school journalist William Liang delivers a devastating assessment of “incoherent” enforcement where students easily game detection tools while legitimate work gets flagged. His radical solution? “Teachers should not be allowed to assign take-home work that ChatGPT can do. Period!”

The twist? Both are AI optimists who want redesign, not bans. Liang envisions students “apprenticing with Ernest Hemingway or Isaac Newton” through AI tutoring rather than assignment completion. Whilst universities debate complex policies and vendors sell transformation packages, students are already developing boundaries: “I made a rule that anything on my Word doc has to be my own sentences.” The message to institutions drowning in vendor pitches? Stop trying to catch students and start listening – they’re not the problem to solve but partners who understand the solution.

Darren Aronofsky Makes AI Films While Parents Create Octopus Videos: The Creative Revolution Gap | Reality Check 🎬

The AI creative revolution is having an identity crisis, perfectly encapsulated by two recent releases. Darren Aronofsky – the visionary behind Black Swan and Requiem for a Dream – just dropped “ANCESTRA,” a haunting AI-powered film exploring birth, memory, and transformation through Google DeepMind’s latest models. It’s a genuine artistic achievement that uses Veo, Gemini, and Imagen to extend live-action performance into otherworldly sequences that feel both deeply personal and technologically transcendent. This is what happens when serious filmmakers lead with story and use AI as a sophisticated creative tool rather than a replacement for imagination.

Meanwhile, parents are using Canva’s new Veo-powered feature to create lovely video stories for their kids – like “A Day in the Life of O’cho the Octopus”. The gap between these use cases reveals something interesting: the same technology enabling Aronofsky’s poetic fusion of reality and AI-generated dreamscapes also lets any parent quickly create personalised content for their children. Love the duality of purpose – AI to amplify human creativity when wielded by masters of the craft, whilst tools like Canva show how the same tech can democratise smaller, though equally important, creative moments.