Hi

Hope you had a great weekend wherever you are.

Been thinking that we’ve been nerding out hard on the “tech” side of the “edTech” equation with these updates recently – sorry about that but then, some of them are pretty mindblowing (oh hi DeepSeek 👋). Happily, there have been no enormous announcements or breakthrough models over the last week, so we tried to take a moment to lean the other way a bit more with this update. Enjoy!

🎓 Mind the Gap: AI’s Educational Impact Report 2025 | Digital Divide Alert

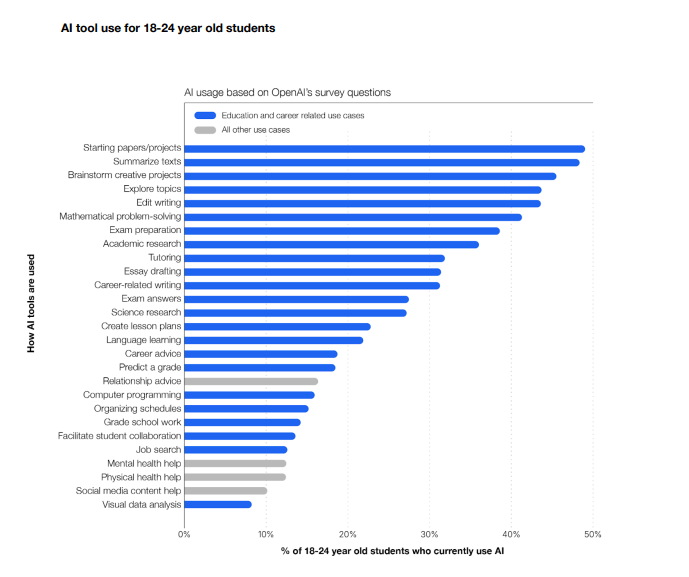

New research from OpenAI and RAND reveals a fascinating but concerning picture of AI’s educational impact in 2025. While over one-third of college students are embracing AI tools like ChatGPT (with particularly high adoption in tech-forward states like California and Virginia), the K-12 landscape tells a more complex story – only about 25% of teachers are using AI, though principals are leading the charge with nearly 60% adoption. There are also interesting findings on how students are using these tools: to kick-start papers and projects, summarise texts, brainstorm, explore, edit and more.

What’s particularly striking about the findings is the emerging “AI opportunity gap”. While well-resourced institutions rapidly integrate AI across their operations, those in higher-poverty areas face significant adoption barriers. There’s an intriguing twist though – when teachers in high-poverty schools do access AI tools, they use them more intensively than their better-resourced counterparts. This suggests the promise of “an AI tutor for every student” isn’t just aspirational – when given the opportunity, educators embrace AI’s ability to provide personalised support at scale. The question now becomes: how do we ensure these powerful tools reach every classroom? 🌟

There are interesting parallels here with the recent Anthropic Economic Index.

🔍 The AI Emperor’s New Clothes: Higher Ed’s Reality Check 2025 | Tech Analysis

Ever feel that the stories we tell about AI in education sound almost too good to be true? It might be that they are. In an interesting and timely review of the top eight myths of AI, “Don’t believe the hype. AI myths and the need for a critical approach in higher education”, the authors draw from a wide range of sources to better understand the current state of AI in HE and beyond.

It’s a fresh enough piece that it references AI’s “Sputnik moment” (i.e., DeepSeek AI achieving GPT-4 level performance at a fraction of the cost), noting that this development forces us to rethink not just who leads the AI race, but what AI education really means. With HE enrolments falling, public trust in universities plummeting (from 57% to 36% in just a decade), and costs spiralling increasingly out of control, the message is clear: we need to move beyond the hype and tackle the real challenges of integrating AI into education. The question isn’t whether AI will transform learning – it’s whether we can approach this transformation with the critical thinking and clear vision it demands. What educational future are we really building? 🤔

🧠 Rethinking Critical Thinking: Carnegie Mellon’s AI Workplace Study | Future Skills Report

A fascinating new study from Carnegie Mellon University and Microsoft has uncovered a critical paradox in how we interact with AI tools at work: higher trust in AI correlates with decreased critical thinking even as users perceive it as easier, while stronger self-confidence leads to deeper critical engagement despite feeling more effortful. Through analysing 319 knowledge workers and 936 real-world AI use cases, researchers found that AI is reshaping how we think – shifting cognitive effort from information gathering to verification, from problem-solving to response integration, and from task execution to what they call “task stewardship.” 🔄

This mirrors broader industry patterns we’re seeing – while Anthropic’s recently published Economic Index shows widespread AI adoption for augmentation rather than automation, and RAND’s education study reveals concerning disparities in AI literacy, this research suggests something deeper: the quality of human-AI interaction may matter more than raw adoption rates. The study found that about 60% of workers reported critical thinking was still essential when using AI, but the nature of that thinking has evolved. Task stewardship emerges as a new core competency – the ability to orchestrate AI inputs, verify outputs against domain expertise, and thoughtfully integrate AI-generated content while maintaining human agency. The key question emerging: how do we design AI systems that enhance rather than erode our cognitive capabilities? 🤔

🌟 Breaking AI’s Trust Barrier: Multiple Agents Transform Education | Tech Breakthrough 2025

Hallucinations are annoying. Not going to lie, I’ve had some pretty terse words with Claude when it’s fed me confident lies 🤬. There might be a fix though: don’t just use one AI – use a team of them! By implementing a multi-agent system where different AIs work together to fact-check and refine content, researchers achieved a remarkable 96% reduction in AI hallucinations across 310 test cases. Think of it as creating an AI peer review system, where each agent has a specialised role: one generates content, another reviews it for accuracy, and a third refines and validates the information. 🤖
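For the curious, here is a rough sketch of what that generate, review, refine hand-off could look like in code. To be clear, this is a toy illustration and not the researchers’ implementation: the three “agents” are plain Python stubs rather than real models, and every name in it (`TRUSTED`, `generate`, `review`, `refine`, `answer`) is made up for the example. In a real system each step would presumably call a model or a trusted source instead.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical set of trusted statements the reviewer checks against.
# Assumption: a real system would query another model or a vetted database.
TRUSTED = {
    "Canberra is the capital of Australia.",
    "Wellington is the capital of New Zealand.",
}


@dataclass
class Review:
    supported: List[str]  # claims the reviewer could verify
    flagged: List[str]    # claims the reviewer could not verify


def generate(question: str) -> List[str]:
    """Agent 1 (stub): returns a draft as a list of claims, one deliberately wrong."""
    return [
        "Canberra is the capital of Australia.",
        "Auckland is the capital of New Zealand.",  # the "hallucination"
    ]


def review(claims: List[str]) -> Review:
    """Agent 2 (stub): sorts claims into supported and flagged."""
    supported = [c for c in claims if c in TRUSTED]
    flagged = [c for c in claims if c not in TRUSTED]
    return Review(supported, flagged)


def refine(result: Review) -> str:
    """Agent 3 (stub): keeps supported claims and notes how many were dropped."""
    text = " ".join(result.supported)
    if result.flagged:
        text += f" [{len(result.flagged)} unverified claim(s) removed]"
    return text


def answer(question: str) -> str:
    """Chains the three agents: generate, then review, then refine."""
    return refine(review(generate(question)))


print(answer("What are the capitals of Australia and New Zealand?"))
```

The point of the sketch is only the shape of the pipeline: no single agent’s output reaches the reader without passing through a second and third pair of eyes.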

The implications for the world of work (and HE) are enormous. Imagine having AI teaching assistants that can generate educational content with significantly reduced risk of factual errors, or research assistants that can more reliably summarise academic papers. This is also a nod to a world where we can chain together agents that provide those “Wait, but…” moments that DeepSeek does so well – that “self-doubt” lets you go back and correct yourself – super handy. Combine these with library databases or other locked-down systems and things start getting very interesting… whenever it comes, this paper potentially represents a crucial step toward more trustworthy AI tools in education.

🌟 AI in ANZ Education 2025: From Theory to Transform | Tech Impact Report

The recent 2025 AI in Education Symposium Australia & New Zealand sounds (and looks) like it was an absolute cracker with educators from ~30 universities across Australasia (and beyond) coming together to showcase how they have been meaningfully integrating AI into HE. The diversity of projects – spanning veterinary simulations, cross-cultural AI literacy, and AI-assisted game design – highlighted just how deeply AI has been woven into the pedagogical fabric across Australasia. Huge props to the organisers Danny Liu, Tim Fawns, Russell Butson, and more on what sounds like an excellent event and even bigger thanks for making the recordings and other resources available 👏🙏

Highlight for me – loving the focus on collaborative AI integration rather than technological displacement. The presentations demonstrated this change through concrete examples – Auckland University of Technology’s research writing initiatives transforming how students approach academic writing, and the University of Waikato’s groundbreaking work bridging Western and Chinese AI ecosystems. Against this backdrop of practical innovation, the EweSYD project’s use of AI simulations for veterinary education emerged as a particularly creative example of how AI can enhance hands-on professional training. 🐑

As we’ve seen through these interconnected developments – from RAND’s sobering digital divide findings to Carnegie Mellon’s insights into task stewardship, and from emerging multi-agent solutions to practical implementations across ~30 ANZ institutions – we’re at a crucial juncture in AI’s educational integration. The challenges are clear: ensuring equitable access, maintaining critical engagement, and developing reliable AI systems. But the opportunities are equally compelling, particularly as demonstrated by the innovative approaches showcased at the ANZ AI Symposium.

The path forward seems to lie in what we might call “thoughtful integration” – where AI tools are neither blindly trusted nor dismissed, but carefully woven into educational practice through approaches like task stewardship and multi-agent verification. As institutions like the University of Waikato and Auckland University of Technology demonstrate, success comes not from focusing solely on the technology, but from understanding how to blend AI capabilities with human expertise while maintaining critical thinking and educational equity. The future of AI in education isn’t just about having the right tools – it’s about developing the right frameworks and attitudes for their use. 🎯