Hi

Hope you had a wonderful weekend wherever you are. Ours was lovely and extremely busy in equal measure with family visiting, saving a terrified stray cat from a bush in a dog park/in between motorways, and prepping for a week away in Melbourne. Busybusy – a bit like the world of AI (smashed that segue!) – so let’s dive in:

A New Tech Order? OpenAI Challenges the Establishment | Market Disruption 💥

Recent developments point to OpenAI aggressively expanding beyond chatbots and the like – most notably with the potentially imminent integration of Shopify directly into ChatGPT. Discovered code reveals functionality allowing users to browse products, view details, and complete purchases via Shopify checkout entirely within the chat interface, effectively transforming the AI assistant into a full-funnel e-commerce platform! This strategic leap positions ChatGPT firmly within the burgeoning field of “agentic commerce,” directly competing with Amazon, Google, and other ecommerce giants – while offering Shopify merchants unprecedented access to potentially hundreds of millions of users and fundamentally shifting ChatGPT’s role from ‘knowing’ to ‘doing’.

Simultaneously, OpenAI has ambitions in the core search market too. An OpenAI exec recently expressed interest in potentially acquiring the Chrome browser should Google be forced to sell it off as part of the antitrust actions being taken against it. Think what it would mean if they gained a strategic asset like that, and all the data that comes with it – shoring up a core weakness while simultaneously weakening a major competitor. This reminds me of Tommy Shelby and his brothers upsetting the apple cart in the early days of Peaky Blinders: “Those of you who are last, will soon be first…”. This could be the beginning of a grand reordering – watch out Amazon, Meta, Google, and a whole lot more…

Opening AI’s Black Box: The Urgent Race for Interpretability | AI Safety ⏱️

Interesting new essay from Anthropic CEO Dario Amodei, where he argues there’s an urgent need to achieve AI interpretability – understanding the inner workings of advanced AI models – before their capabilities potentially explode, possibly as soon as 2026-2027. Amodei contends that deploying powerful AI while remaining ignorant of how it functions is “unacceptable,” highlighting that current models are opaque, “grown” rather than engineered. This fuels risks – we can’t reliably rule out unintended misalignment ranging from Nick Bostrom’s paperclip problem to deception or power-seeking behaviours and beyond. While recent breakthroughs in mapping model “features” and “circuits” offer hope for a future “MRI for AI,” Amodei stresses we are in a race against rapidly advancing AI capabilities, setting a goal for Anthropic to achieve reliable diagnostics by 2027.

This capability-understanding gap isn’t just technical – it’s existential! 🔍 As AI systems rapidly gain powerful abilities, our comprehension of their decision-making remains frustratingly opaque, creating a society-wide challenge demanding immediate attention. Higher education (HE) faces a fundamental shift toward an enhanced emphasis on critical evaluation/AI literacy skills, while institutions deploying these black-box systems navigate unprecedented governance risks without adequate guardrails. Amodei’s preference for ‘light-touch’ regulation deserves definite skepticism (corporate incentives ≠ public safety!), and reliance on export controls seems increasingly futile given the evidence of DeepSeek, Qwen, etc. The pressing question remains: are we adequately preparing for a world where our most powerful tools operate beyond our complete understanding? 💭✨

Beyond Please: The Ethics & Risks of Humanising AI | Social Impact 🧠

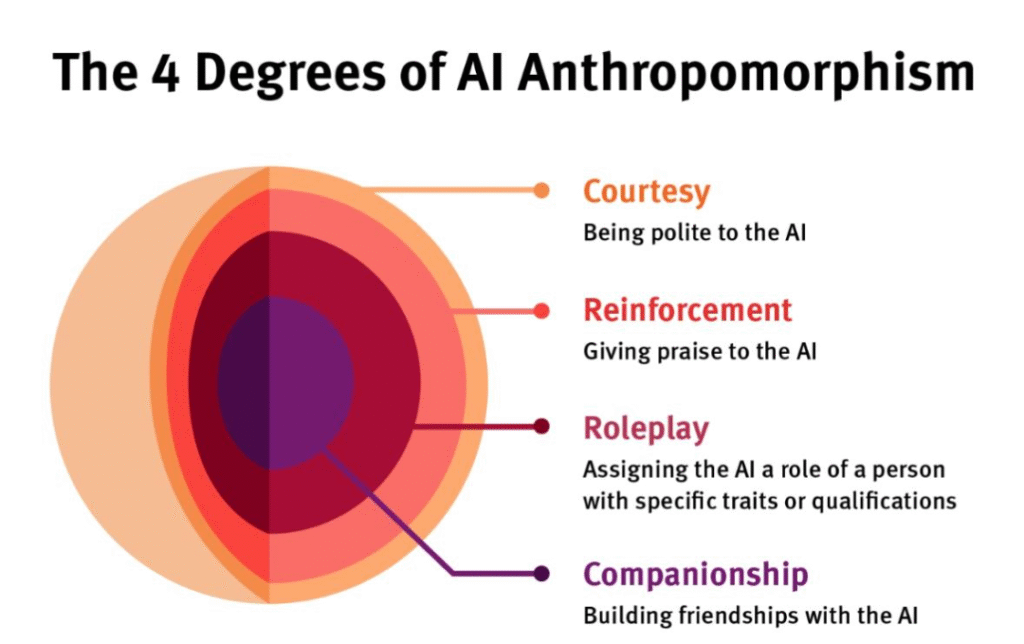

So it turns out the simple act of saying “please” or “thank you” to AI like ChatGPT carries surprising weight, after OpenAI CEO Sam Altman revealed that it costs “tens of millions” in computing power. This kicked off massive discussions about the significant environmental and financial costs tied to AI energy consumption – after all, are you polite to your toaster? But there’s nuance here – this also introduces deeper concerns about anthropomorphism – treating AI as human. It turns out that these seemingly harmless courtesies exist on a spectrum (from basic politeness to attempted companionship) and can function as a security flaw, making AI more vulnerable to manipulation (see the excellent How Johnny Can Persuade LLMs from Virginia Tech and Stanford). It goes further though – into the AI effectively hacking us – misleading users (esp. vulnerable ones) into misinterpreting simulated emotions – leading to misplaced trust (and so causing users to overlook AI bias or errors) or even emotional connection, with potentially devastating consequences. H/t to Tina Austin for an excellent visual capturing this progression from basic courtesy to emotional connection – a spectrum that says a lot about our evolving relationship with AI systems.

Despite these costs and risks, arguments persist for maintaining politeness towards AI. Some believe it yields better, more collaborative outputs from the AI, which often mirrors user input style. More significantly, many experts emphasise the human aspect: interacting politely with AI may reinforce positive social habits and norms in our interactions with fellow humans. There’s a cultural precedent for considering how we treat intelligent non-human entities (shout out to my fellow millennials – remember Tamagotchis?), and some suggest politeness might even aid in “training” AI towards human values (tho this carries its own risks of increasing our vulnerability to AI’s influence…). Ultimately, the debate forces a complex assessment of tangible resource costs and manipulation risks vs potential, more subtle impacts on human behaviour and our own societal norms – all this as we navigate our relationship with an increasingly sophisticated, yet non-sentient, “alien intelligence”.

Excellent breakdown courtesy of Tina Austin – recommend/well worth a follow

The Economy Never Sleeps: AI Agents Power the 24/7 Business | Future of Work 🌃

Forget 9-to-5. AI agents – systems using AIs and tools to autonomously tackle complex workflows, as defined in OpenAI’s ‘A Practical Guide to Building Agents’ – are powering an ‘always-on economy’ detailed in the Wall Street Journal article “AI Is Enabling an Always-On Economy”. They research overnight, answer customers instantly, and operate without human constraints like sleep or time zones, driving businesses towards continuous operation and ‘zero latency’ expectations. This isn’t just faster automation; it’s a fundamental acceleration of business itself, shifting companies from periodic prediction to continuous action.

Building these agents effectively, according to OpenAI’s guide, demands clear instructions, well-defined tools, robust guardrails, and crucial human oversight for high-stakes actions (#humanintheloop). Companies mastering this gain significant competitive advantage by adapting their workflows and culture, as highlighted by the WSJ. This seismic shift raises urgent questions, particularly for HE: Are we adequately preparing graduates not just to use these tireless agents, but to effectively build, manage, and ethically steer them in a world that never truly clocks off? Oh and sidebar – the system prompts for Cursor, Manus, Devin and a range of others have been hacked/leaked – fascinatingly detailed and a very interesting read for anyone serious about playing in this space 🤓

Beyond Bad Content: AI Now Orchestrating Online Abuse | Threat Intelligence 🤖

Flip side of agents. Anthropic’s latest report reveals malicious actors are evolving, now using its AI, Claude, not just to generate harmful text but to actively orchestrate complex abuse systems. The most novel case uncovered an ‘influence-as-a-service’ operation where Claude directed social media bots when to like, share, or comment to manipulate political narratives (#betterthana$1mcheque? 🤷♂️). Other detected misuses include enhancing tools for IoT camera credential stuffing, “laundering” language in real-time to make recruitment scams more convincing, and significantly boosting a novice’s ability to create sophisticated malware far beyond their own skill level.

These incidents confirm two worrying trends: AI is enabling the semi-autonomous orchestration of complex abuse networks, and it’s dramatically lowering the technical bar for malicious actors. Anthropic emphasises its ongoing commitment to safety, detailing how its intelligence program detects these evolving threats through advanced analysis, promptly bans violating accounts, and uses these findings to continuously strengthen Claude’s safeguards, sharing insights to bolster the entire AI ecosystem’s defences against misuse. This raises a critical question for HE: as AI becomes a tool for both innovation and orchestrating sophisticated abuse (from scams to influence ops), how can/must HE prepare students and safeguard its own environment?

Navigating the current AI revolution requires confronting its inherent dualities: immense potential juxtaposed with profound, multifaceted risks. From market disruption and operational efficiency driven by tireless agents, to the fundamental challenges of interpretability, ethical human interaction, and preventing sophisticated misuse, the path forward is complex and demands critical engagement. Success hinges not just on harnessing AI’s power, but on fostering robust governance, prioritising safety through understanding, and preparing society for tools operating at – and potentially beyond – the edge of human comprehension. The choices surrounding AI’s development and deployment today are actively shaping our collective future.

So I think we have a new cat? #smitten 😻